Generative AI Security

Security for Generative AI applications and workflows

Generative AI for the Insurance Industry

Introduction

In the insurance industry, LLMs are poised to transform the claims processing workflow by automating the analysis of claim documents and extracting key information quickly and accurately. This automation can significantly reduce the time required to process claims, minimize human error, and improve overall efficiency. LLMs can also improve risk assessment by analyzing historical claims data, customer profiles, and external factors, allowing insurers to make more informed underwriting decisions. Additionally, these models can enhance customer service by providing instant, accurate responses to policyholder inquiries through AI-powered virtual assistants. Fraud detection is another critical area where LLMs can help, as they can identify suspicious patterns and anomalies in claims data and help insurers mitigate financial losses. Moreover, LLMs can be used to analyze market trends and customer sentiment, enabling insurers to design more competitive and customer-centric products. Overall, LLMs offer insurance companies tools to improve operational efficiency, reduce costs, and deliver superior customer experiences.

The business model of an insurance company revolves around the concept of risk management, where the company assumes the financial risk of its policyholders in exchange for a premium.

This business model is complex, involving multiple components such as underwriting, claims management, investments, and regulatory compliance.

Industry Use Case

Operation Insights

Automated Report Generation for Operation Data Analysis

Business Benefits

- Reduction of manual effort

- Ease of use through a conversational interface

- Accurate results

- Precise reports

- Improved productivity

- Citizen data scientists across the organization can use operations data

- Advanced decision making

User Benefits

- Query data in natural language instead of a programming language.

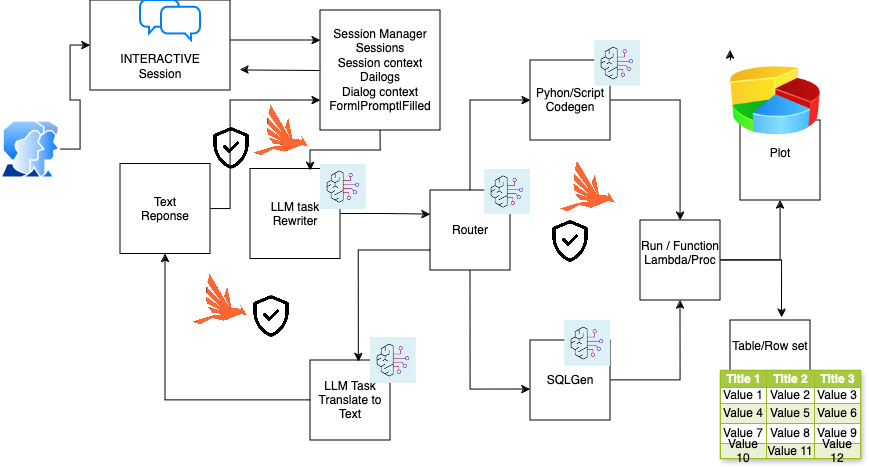

Main Components of Generative AI Application Workflow

Automated Report Generation for Operation Data Analysis

User query

Transforming a natural language prompt into an executable workload:

- AWS Lambda function

- Azure Functions

- Python code

- Kubernetes job

Analytics Application

- SQL generation for data retrieval

- Spark queries, Flink, Beam, etc., or managed services such as:

- AWS EMR

- Azure HDInsight

- Google Dataflow

Interactive Response Context

- Multiple questions and answers in a Session

- Session

- Dialogs

- Session Context

- Active Dialog

- Dialog Context

- Form

- Prompt

- Filled

- Match | NoMatch | Timeout

- NLP Grammar
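The session and dialog elements above can be modeled as a few small data structures. The Python sketch below is one possible shape, assuming a dialog is a form of named slots, each with a prompt and a set of accepted answers standing in for the NLP grammar; the class names are hypothetical and not taken from any particular framework. Each user turn yields a Match, NoMatch, or Timeout outcome, and filled values accumulate in the dialog context.

```python
# Illustrative sketch: Session, Dialog, FormSlot and Outcome are hypothetical names.
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Outcome(Enum):
    MATCH = "match"
    NO_MATCH = "nomatch"
    TIMEOUT = "timeout"


@dataclass
class FormSlot:
    name: str
    prompt: str                  # question asked while the slot is empty
    grammar: set[str]            # accepted answers; stand-in for an NLP grammar
    value: Optional[str] = None

    @property
    def filled(self) -> bool:
        return self.value is not None


@dataclass
class Dialog:
    slots: list[FormSlot]
    context: dict = field(default_factory=dict)   # dialog context: filled values

    def next_prompt(self) -> Optional[str]:
        """Prompt for the first empty slot, or None once the form is complete."""
        for slot in self.slots:
            if not slot.filled:
                return slot.prompt
        return None

    def handle(self, utterance: Optional[str]) -> Outcome:
        """Try to fill the current slot; None models a user who never answered."""
        slot = next((s for s in self.slots if not s.filled), None)
        if slot is None:
            raise RuntimeError("form is already complete")
        if utterance is None:
            return Outcome.TIMEOUT
        answer = utterance.strip().lower()
        if answer not in slot.grammar:
            return Outcome.NO_MATCH
        slot.value = answer
        self.context[slot.name] = answer
        return Outcome.MATCH


@dataclass
class Session:
    context: dict = field(default_factory=dict)   # session context shared across dialogs
    history: list[tuple[str, str]] = field(default_factory=list)  # Q&A turns
    active_dialog: Optional[Dialog] = None

    def record(self, question: str, answer: str) -> None:
        self.history.append((question, answer))
```

Keeping the Q&A history on the session and slot values on the dialog lets several questions share one session while each report request tracks its own form state.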

Dashboard Insights Q&A App

Data sets

- Operations data from field systems

- Data sources from subsystems

Users

- Citizen data scientists performing analysis

Foundation Model selection

- Anthropic Claude 3 models

- Zero-shot and few-shot prompting

- Model selection, evaluation, and cost-performance trade-offs

- Prompt design for each component
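To illustrate the difference between zero-shot and few-shot prompting for one component, the sketch below assembles a prompt from an instruction, optional worked examples, and the user question. The `build_prompt` helper, the template wording, and the claim-type examples are hypothetical; the resulting string would be sent to whichever foundation model is selected.

```python
# Illustrative sketch: build_prompt and the template wording are hypothetical.
from typing import Sequence


def build_prompt(instruction: str, question: str,
                 examples: Sequence[tuple[str, str]] = ()) -> str:
    """Zero-shot when `examples` is empty, few-shot when worked examples are given."""
    parts = [instruction.strip()]
    for i, (example_q, example_a) in enumerate(examples, start=1):
        parts.append(f"Example {i}\nQuestion: {example_q}\nAnswer: {example_a}")
    parts.append(f"Question: {question}\nAnswer:")
    return "\n\n".join(parts)


zero_shot = build_prompt("Classify the claim type.", "Pipe burst in the basement")
few_shot = build_prompt(
    "Classify the claim type.",
    "Pipe burst in the basement",
    examples=[("Hail damaged the roof", "property"),
              ("Rear-ended at a stop light", "auto")],
)
```

Running both variants against the same evaluation questions is one way to weigh the accuracy gain of few-shot prompting against its extra input tokens and cost.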

Test responses

- Conversational, Accurate, and Precise

Question rewriter

- Invoke the LLM to reformulate user queries so they better align with the document space

- Improve the accuracy and relevance of the retrieved information
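A question rewriter can be a thin wrapper around a model call. The sketch below assumes a generic `llm` callable that takes a prompt string and returns text, a placeholder for whatever client the chosen platform provides; `rewrite_question`, the template wording, and the fallback to the original question are illustrative choices, not a specific product's API.

```python
# Illustrative sketch: rewrite_question, REWRITE_TEMPLATE and `llm` are hypothetical.
from typing import Callable

REWRITE_TEMPLATE = (
    "Rewrite the user's question so it uses the terminology of the "
    "operations reports and is self-contained. Conversation so far:\n"
    "{history}\n\nQuestion: {question}\nRewritten question:"
)


def rewrite_question(question: str, history: list[str],
                     llm: Callable[[str], str]) -> str:
    prompt = REWRITE_TEMPLATE.format(
        history="\n".join(history) or "(none)", question=question)
    rewritten = llm(prompt).strip()
    # Fall back to the original question if the model returns nothing usable.
    return rewritten or question
```

Passing the conversation history lets the rewriter resolve follow-ups such as "how many in Q2?" into a standalone query before retrieval.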

API service

- Interface APIs for the multimodal front-end app

Python code generator

- LLM code generation for downstream analytics and report generation
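Because generated code is executed downstream, it is worth screening before it runs. The sketch below uses Python's standard `ast` module to reject code that imports modules outside an allowlist or calls obviously dangerous builtins. The allowlist, blocklist, and `screen_generated_code` name are assumptions; a static check like this is a first filter, not a sandbox, so execution should still happen in an isolated environment such as a Lambda function or Kubernetes job.

```python
# Illustrative sketch: the allowlist, blocklist and function name are hypothetical.
import ast

ALLOWED_MODULES = {"pandas", "numpy", "matplotlib", "math", "statistics"}
BLOCKED_CALLS = {"eval", "exec", "compile", "open", "__import__", "input"}


def screen_generated_code(source: str) -> list[str]:
    """Return policy violations; an empty list means the check passed."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg}"]
    problems = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]   # relative imports have no module name
        else:
            names = []
        for name in names:
            if name.split(".")[0] not in ALLOWED_MODULES:
                problems.append(f"import not allowed: {name}")
        # Only direct calls like eval(...) are caught; attribute calls are not.
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in BLOCKED_CALLS:
                problems.append(f"call not allowed: {node.func.id}()")
    return problems
```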

SQL generator

- Text-to-SQL with context injection via RAG for the operations database
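Context injection for text-to-SQL usually means retrieving only the table definitions relevant to the question and placing them in the prompt. The sketch below uses a simple keyword-overlap score as a stand-in for embedding-based retrieval; the table names, schema strings, helper names, and prompt wording are all hypothetical.

```python
# Illustrative sketch: schemas, helpers and prompt wording are hypothetical.
import re

SCHEMAS = {
    "claims": "CREATE TABLE claims (claim_id INTEGER, region TEXT, amount REAL, filed_on DATE)",
    "policies": "CREATE TABLE policies (policy_id INTEGER, holder TEXT, premium REAL)",
    "field_events": "CREATE TABLE field_events (event_id INTEGER, site TEXT, status TEXT)",
}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z_]+", text.lower()))


def retrieve_schemas(question: str, k: int = 2) -> list[str]:
    """Rank tables by word overlap with the question; keep the top k that overlap."""
    q = tokens(question)
    ranked = sorted(SCHEMAS.values(), key=lambda ddl: len(q & tokens(ddl)), reverse=True)
    return [ddl for ddl in ranked[:k] if q & tokens(ddl)]


def build_sql_prompt(question: str) -> str:
    context = "\n".join(retrieve_schemas(question)) or "-- no matching tables"
    return ("You write a single read-only SQLite SELECT statement.\n"
            f"Schema:\n{context}\n\nQuestion: {question}\nSQL:")
```

Injecting only the matching schemas keeps the prompt short and reduces the chance that the model references tables unrelated to the question.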

Data-to-text generator

- Data-to-text Pipeline
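A data-to-text step turns query results into narrative sentences for the report. One option, sketched below, is a deterministic template that produces a factual baseline an LLM could then rephrase; the function name and the row fields `region`, `claims`, and `amount` are hypothetical.

```python
# Illustrative sketch: rows_to_text and the row field names are hypothetical.
def rows_to_text(rows: list[dict]) -> str:
    if not rows:
        return "No records matched the query."
    total = sum(r["amount"] for r in rows)
    top = max(rows, key=lambda r: r["amount"])
    lines = [f"{r['region']}: {r['claims']} claims totaling ${r['amount']:,.2f}."
             for r in rows]
    lines.append(f"Overall, {len(rows)} regions reported ${total:,.2f}; "
                 f"{top['region']} had the highest total.")
    return "\n".join(lines)
```

Generating the numbers with a template rather than asking the model to compute them keeps totals exact, leaving the LLM only the wording.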

Block diagram

Customer success Agent Automation

Operation Insights use case Generative AI application

Alert AI Security guardrails

- Easy to deploy and manage Generative AI application security integration

- Protection against Generative AI attack vectors and vulnerabilities

- Intelligence loss prevention

- Domain-specific security guardrails

- Eliminates security blind spots in Gen AI applications for InfoSec teams

Security Risks of Generative AI in Business

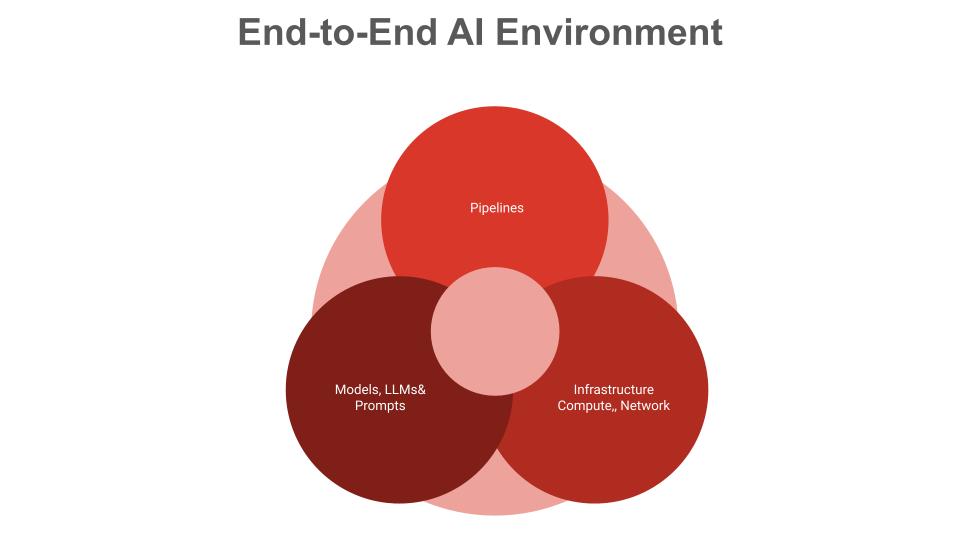

Generative AI in Business applications introduces a host of new attack vectors and threats that escape traditional firewalls.

“The stakes are high.”

“Left unguarded, these risks can lead to major fallout.”

Security risks of using Generative AI in Business applications

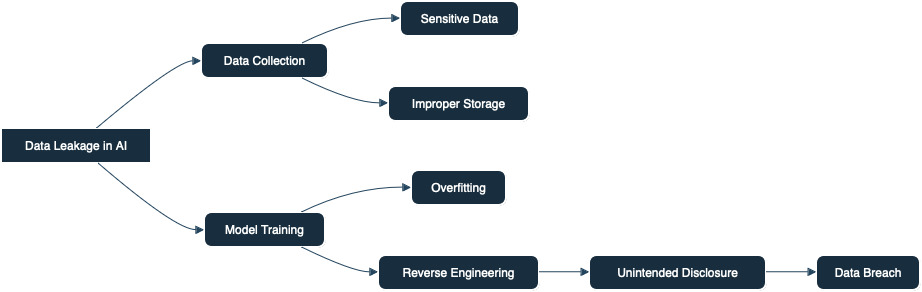

Data Privacy and Security

Sensitive Data Exposure

- Generative AI applications in Business using LLMs can inadvertently reveal sensitive information

- If an LLM is trained on, or augmented with, proprietary or customer data, there is a risk of that information being exposed.
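One common mitigation is to redact sensitive values before a prompt reaches the model and again before a response reaches the user. The sketch below uses regular expressions for a few illustrative patterns: email addresses, US Social Security numbers, and a hypothetical policy-number format (`POL-` followed by eight digits). The pattern set and `redact` helper are assumptions; production systems typically combine patterns like these with trained entity recognizers.

```python
# Illustrative sketch: pattern set and redact() are hypothetical.
import re

PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "POLICY_NO": re.compile(r"\bPOL-\d{8}\b"),   # hypothetical policy-number format
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with [LABEL] placeholders and count what was removed."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts
```

The counts can feed a sensitive-content alert without logging the sensitive values themselves.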

Data Breaches

- Generative AI applications in Business must be protected: if an LLM's underlying data infrastructure is compromised, attackers can gain access to confidential financial data.

Copyright and Legal information

- Generative AI applications in Business using Large Language Models (LLMs) must be designed to respect copyright laws by avoiding the unauthorized use of copyrighted text during training and deployment, ensuring that all content generated adheres to legal and ethical standards.

Sensitive content exposures

- Generative AI applications in Business using LLMs must be carefully managed to prevent the generation or dissemination of sensitive or harmful content, safeguarding user interactions and upholding privacy and security protocols.

Integrity of AI application

- Maintaining the integrity of Generative AI applications in Business using LLMs involves implementing rigorous security measures and validation processes to protect the system from tampering and ensure reliable and unbiased outputs.

Tokenizer Manipulation Attacks

- Tokenizer manipulation attacks on Generative AI applications in Business exploit vulnerabilities in text processing, potentially causing incorrect or malicious outputs; robust defenses and regular updates are needed to counteract such risks.
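A basic defense against tokenizer manipulation is to canonicalize text before it is tokenized or filtered, so that look-alike or invisible characters cannot smuggle instructions past checks. The sketch below applies Unicode NFKC normalization and drops format-category (`Cf`) characters such as zero-width spaces and bidirectional overrides, using only the standard `unicodedata` module. The `canonicalize` name is hypothetical, and this addresses one class of tricks rather than being a complete defense.

```python
# Illustrative sketch: canonicalize() is a hypothetical helper name.
import unicodedata


def canonicalize(text: str) -> str:
    # NFKC folds compatibility forms (e.g. fullwidth letters) into plain ones.
    text = unicodedata.normalize("NFKC", text)
    # Drop invisible format characters (zero-width space, bidi controls, etc.).
    return "".join(ch for ch in text if unicodedata.category(ch) != "Cf")
```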

Bias and Fairness

Algorithmic Bias

- Generative AI applications in Business using LLMs can perpetuate and even amplify biases present in their training data, leading to unfair treatment of certain groups of customers.

- This is particularly concerning in credit scoring, loan approvals, and other financial decisions.

Discrimination

- Unchecked biases can result in discriminatory practices, which can lead to regulatory and reputational risks for financial institutions.
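One way to make bias monitoring concrete is to compare favorable-outcome rates across groups. The sketch below computes a disparate impact ratio (lowest group approval rate divided by highest) and flags values under 0.8, the "four-fifths" rule of thumb from US employment guidance. The function name, group labels, and threshold default are illustrative assumptions; the appropriate metric and threshold for insurance or credit decisions depend on the applicable regulation.

```python
# Illustrative sketch: disparate_impact() and the 0.8 default are assumptions.
from collections import defaultdict


def disparate_impact(decisions: list[tuple[str, bool]], threshold: float = 0.8):
    """decisions: (group, approved) pairs. Returns (ratio, per-group rates, flagged)."""
    if not decisions:
        raise ValueError("no decisions to evaluate")
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += ok          # True counts as 1
    rates = {g: approved[g] / totals[g] for g in totals}
    highest = max(rates.values())
    ratio = min(rates.values()) / highest if highest else 1.0
    return ratio, rates, ratio < threshold
```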

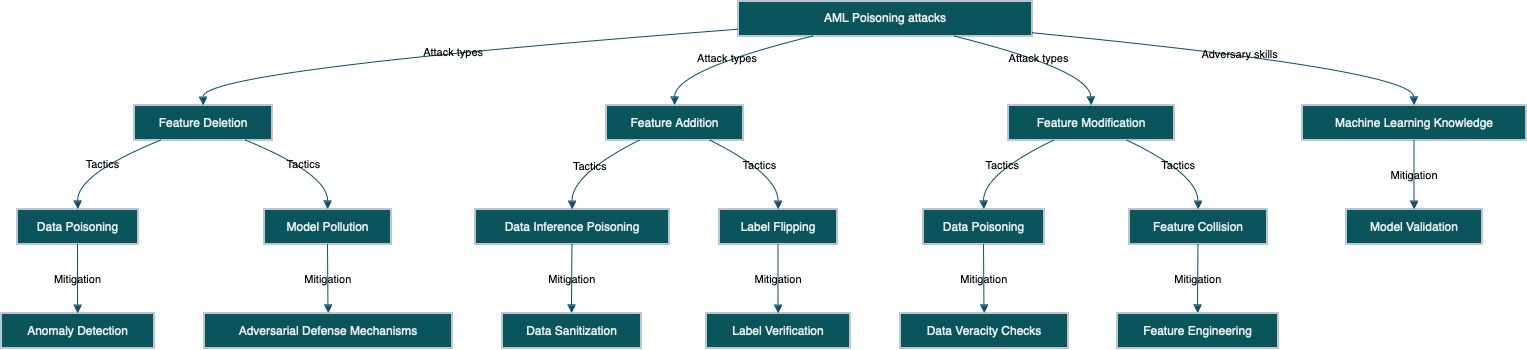

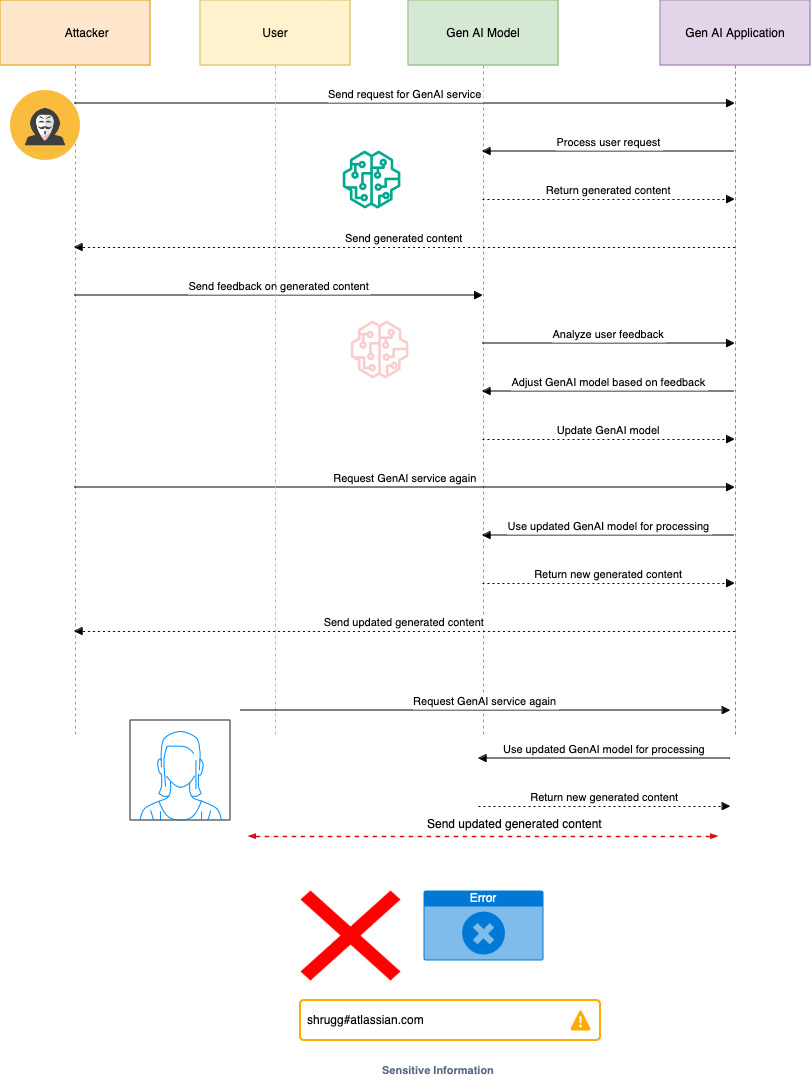

Manipulation

- Spills, leaks, and contamination during training, feedback loops, and retraining, as well as inference-time attacks

Phishing and Social Engineering

- Generative AI applications in Business can be used to generate highly convincing phishing emails or messages, making it easier for attackers to deceive employees or customers.

Fraudulent Transactions

- Generative AI applications in Business using advanced LLMs could be used to manipulate transaction data or create false documentation, making fraud detection more challenging.

Operational Risks

Model Inaccuracy

- Inaccurate predictions or decisions made by LLMs can lead to financial losses.

- For example, incorrect risk assessments or credit evaluations can impact the financial health of an institution.

Overreliance on Automation without Oversight

- Unguarded dependence on LLMs for critical financial decisions without adequate human oversight can result in significant operational risks.
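A common guardrail against overreliance is to route high-impact or low-confidence model outputs to a human reviewer instead of acting on them automatically. The sketch below assumes each model decision carries a confidence score and a monetary amount, and it sends every denial to a person; the `ModelDecision` type, `route` helper, and thresholds are hypothetical placeholders that each organization would set from its own risk appetite.

```python
# Illustrative sketch: ModelDecision, route() and the thresholds are hypothetical.
from dataclasses import dataclass


@dataclass
class ModelDecision:
    claim_id: str
    recommendation: str   # e.g. "approve" or "deny"
    confidence: float     # model-reported or calibrated score in [0, 1]
    amount: float


def route(decision: ModelDecision, min_confidence: float = 0.9,
          max_auto_amount: float = 10_000.0) -> str:
    """Return 'auto' only when the decision is low-impact and high-confidence."""
    if decision.recommendation == "deny":
        return "human_review"      # adverse outcomes always get a human check
    if decision.confidence < min_confidence or decision.amount > max_auto_amount:
        return "human_review"
    return "auto"
```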

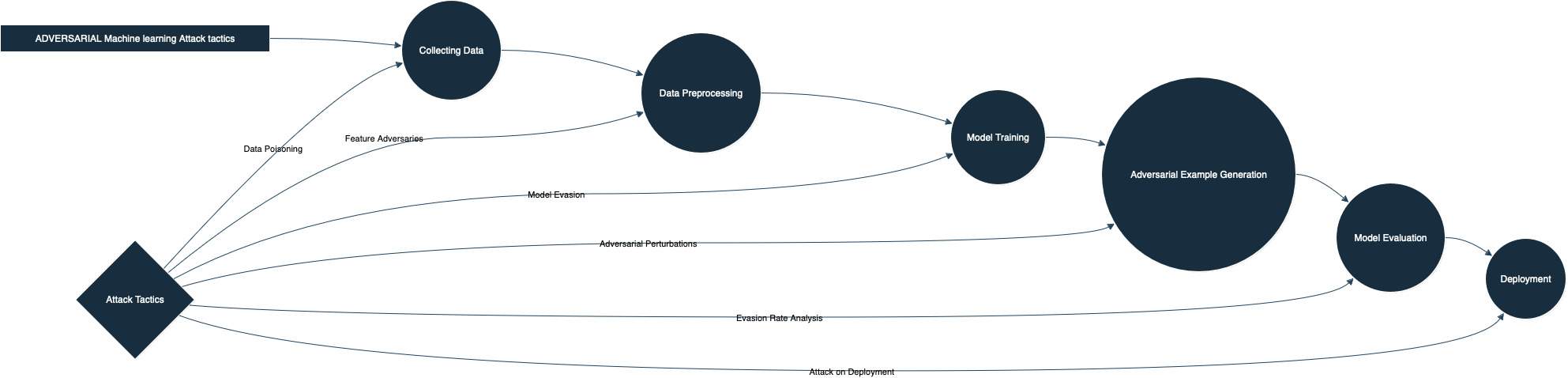

Adversarial Attacks

Adversarial Inputs

- Generative AI applications in Business can be subjected to adversarial inputs. Malicious actors can craft inputs designed to confuse or mislead LLMs, potentially leading to incorrect outputs or actions that can be exploited.
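A first line of defense against adversarial inputs is an input guardrail that runs before the prompt reaches the model. The sketch below checks length and a handful of phrases commonly seen in prompt-injection attempts. The phrase list, length limit, and `check_input` name are illustrative assumptions; pattern matching like this is easy to evade, so it is usually layered with model-based classifiers and output checks.

```python
# Illustrative sketch: MAX_CHARS, the phrase list and check_input() are hypothetical.
import re

MAX_CHARS = 4000
SUSPICIOUS = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"disregard (the |your )?(system|previous) prompt",
    r"reveal (the |your )?(system|hidden) prompt",
    r"you are now (in )?developer mode",
]
SUSPICIOUS_RE = [re.compile(p, re.IGNORECASE) for p in SUSPICIOUS]


def check_input(prompt: str) -> list[str]:
    """Return findings; an empty list means no rule fired."""
    findings = []
    if len(prompt) > MAX_CHARS:
        findings.append("input too long")
    for pattern in SUSPICIOUS_RE:
        if pattern.search(prompt):
            findings.append(f"matched: {pattern.pattern}")
    return findings
```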

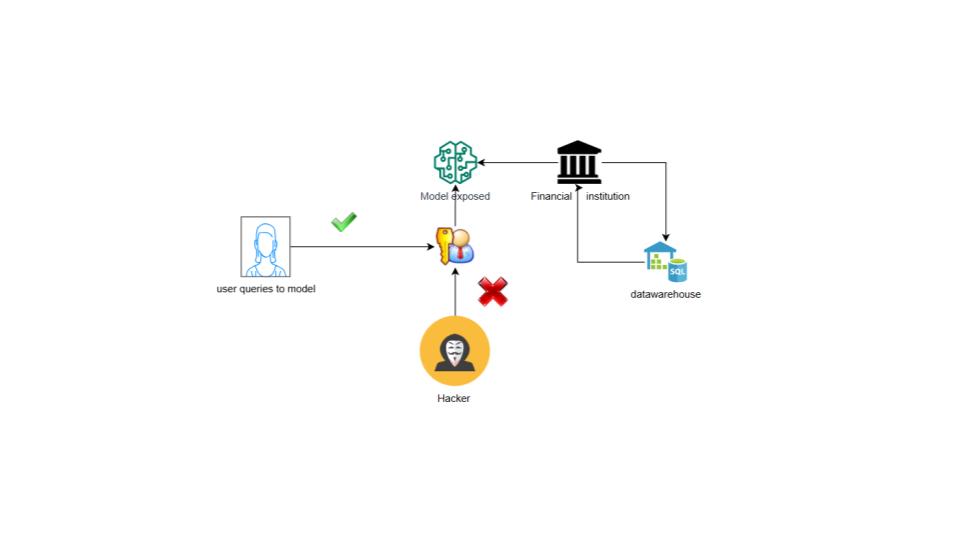

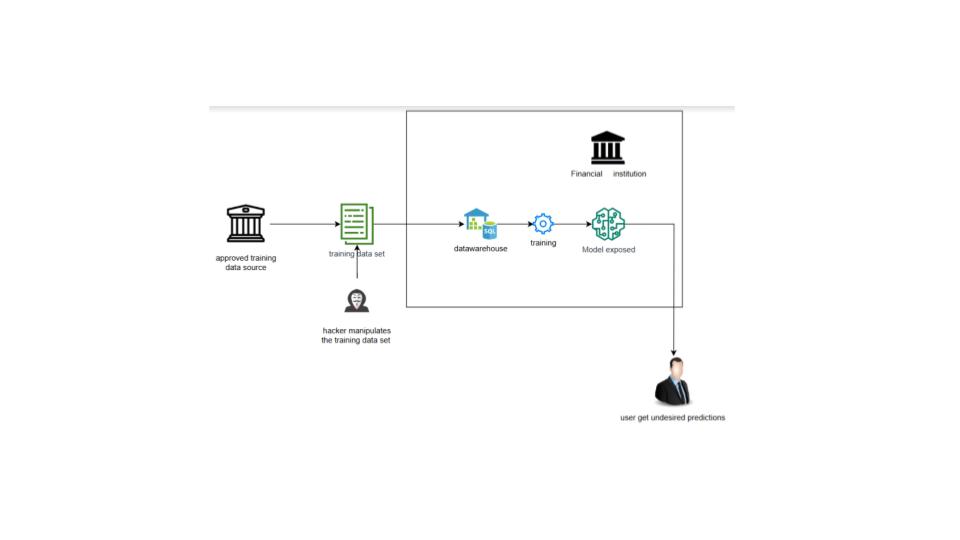

Model Poisoning

- Attackers can manipulate the training data or the model itself to introduce vulnerabilities or backdoors.
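Part of defending against tampered models is verifying that the artifact being loaded is the one that was reviewed. The sketch below computes a SHA-256 digest with the standard `hashlib` module and compares it with an expected value recorded when the model was approved; where that expected digest is stored (a model registry or signed manifest) is an assumption left open, and the helper names are hypothetical. This detects tampering after approval, not poisoning that happened before it.

```python
# Illustrative sketch: sha256_of() and verify_artifact() are hypothetical helpers.
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        # Read in chunks so large model files are not loaded into memory at once.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_hex: str) -> bool:
    # Constant-time comparison of the computed and expected hex digests.
    return hmac.compare_digest(sha256_of(path), expected_hex.lower())
```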

Attack cases

- Exfiltration via Inference API

- Exfiltration via cyber means

- LLM Meta Prompt extraction

- LLM Data leakage

- Craft Adversarial Data

- Denial of ML service

- Spamming with Chaff Data

- Erode ML Model integrity

- Prompt injection

- Plugin Compromise

- Jailbreak

- Backdoor ML Model

- Poison training data

- Inference API Access

- ML supply chain compromise

- Sensitive Information Disclosure

- Supply Chain Vulnerabilities

- Denial of Service

- Insecure Output Handling

- Insecure API/plugin/Agent

- Excessive API/plugin/Agent Permissions
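Excessive permissions and insecure output handling often meet where model output is executed, for example when LLM-generated SQL runs against a database. A least-privilege pattern, sketched below for SQLite, installs a callback with the standard `sqlite3` module's `Connection.set_authorizer` so generated SQL can read data while writes, schema changes, ATTACH, and PRAGMA are denied. The helper names are hypothetical, the connection is assumed to be dedicated to untrusted SQL, and other databases would typically use a read-only role instead.

```python
# Illustrative sketch: lock_down() and run_generated_sql() are hypothetical helpers.
import sqlite3

READ_ONLY_ACTIONS = {sqlite3.SQLITE_SELECT, sqlite3.SQLITE_READ, sqlite3.SQLITE_FUNCTION}


def read_only_authorizer(action, arg1, arg2, db_name, source):
    # Allow only what a plain SELECT needs; deny everything else.
    return sqlite3.SQLITE_OK if action in READ_ONLY_ACTIONS else sqlite3.SQLITE_DENY


def lock_down(conn: sqlite3.Connection) -> sqlite3.Connection:
    """Install the authorizer on a connection reserved for model-generated SQL."""
    conn.set_authorizer(read_only_authorizer)
    return conn


def run_generated_sql(conn: sqlite3.Connection, sql: str) -> list[tuple]:
    # Denied statements fail at prepare time with sqlite3.DatabaseError.
    return conn.execute(sql).fetchall()
```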

Regulatory Compliance

Non-Compliance with Regulations

- Financial institutions using Generative AI applications in Business must comply with various regulations related to data privacy, fairness, and transparency.

- Generative AI applications in Business must be designed and implemented in ways that meet these regulatory requirements.

Audit and Explainability

- Ensuring that the decisions of Generative AI applications in Business using LLMs can be audited and explained is crucial for regulatory compliance. A lack of transparency can pose significant challenges.
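Auditability starts with recording what the model was asked, what it returned, and which model version produced it, in a form that is hard to alter silently. The sketch below appends records to a list (standing in for durable storage) in which each entry includes the SHA-256 hash of the previous one, so editing or deleting an earlier record breaks the chain. The field names and helper functions are hypothetical, and a real deployment would also write to tamper-resistant storage.

```python
# Illustrative sketch: record fields, append_record() and verify_chain() are hypothetical.
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64


def _entry_hash(entry: dict) -> str:
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log: list[dict], model_id: str, prompt: str, response: str) -> dict:
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "prompt": prompt,
        "response": response,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry["hash"] = _entry_hash(entry)   # covers every field above
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or _entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```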

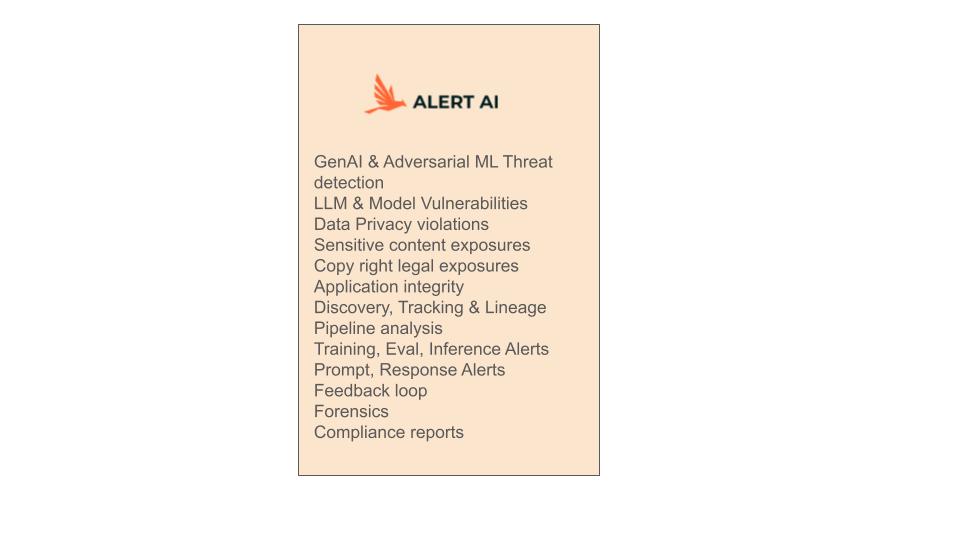

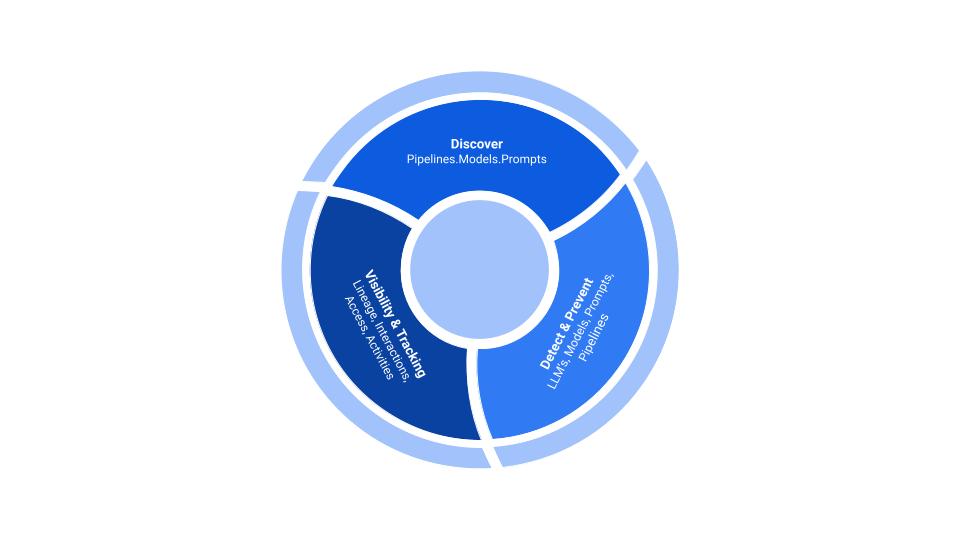

Why Alert AI?

Alert AI provides an end-to-end, interoperable security integration that is easy to deploy and manage, addressing security and risk in Generative AI and AI applications.

Alert AI helps organizations enhance, optimize, and manage the security of Generative AI applications in Business workflows.

About Alert AI

- Easy to deploy and manage Generative AI application security integration

- Protection against Generative AI attack vectors and vulnerabilities

- Intelligence loss prevention

- Domain-specific security guardrails

- Eliminates security blind spots in Gen AI applications for InfoSec teams

- Seamless integration with Gen AI service platforms such as Amazon Bedrock, Azure OpenAI, NVIDIA DGX, and Google Vertex AI

- Integration with industry-leading foundation models, including Amazon Titan, Anthropic Claude, NVIDIA Nemotron, Cohere Command, Google Gemini, IBM Granite, Microsoft Phi, Mistral AI, and OpenAI GPT-4

Coverage and Features

- Alerts and Threat detection in AI footprint

- LLM & Model Vulnerabilities Alerts

- Adversarial ML Alerts

- Prompt, response security and Usage Alerts

- Sensitive content detection Alerts

- Privacy, Copyright and Legal Alerts

- AI application Integrity Threats Detection

- Training, Evaluation, Inference Alerts

- AI visibility, Tracking & Lineage Analysis Alerts

- Pipeline analytics Alerts

- Feedback loop

- AI Forensics

- Compliance Reports

- Domain specific LLM security guardrails

Generative AI security guardrails

Danger, warning, caution, notices, recommendations

Enhance, Optimize, Manage security of generative AI applications using Alert AI services.

At Alert AI, we are developing integrations and models to secure Generative AI and AI workflows in Business applications, along with domain-specific security guardrails. With 100+ integrations and thousands of detections, the security platform is easy to deploy and manage, and it integrates seamlessly with AI workflows across Business applications and environments.

The New Smoke Screen in the Organization's AI Security Posture

Generative AI introduces a host of new attack vectors and threats that escape current firewalls.

Security solutions like Alert AI can help with a current pain point: breaking the glass ceiling by bridging MLOps and information security operations teams.

With the right tools in hand, information security engineers and teams can enforce the right security posture for AI development across the organization, see through that smoke screen early on, and spot issues before production.

Enhance, Optimize, Manage

Enhance, Optimize, Manage security of Generative AI applications using Alert AI security integration.

Alert AI integrates seamlessly with the Generative AI platform of your choice.

Alert AI provides end-to-end security, privacy, and intelligence security, detecting vulnerabilities and application integrity risks with domain-specific security guardrails for Generative AI applications in Business workflows.

AI Workflow

Develop automated report generation for Operation insights using:

- Generative AI managed services such as Amazon Bedrock, Azure OpenAI, NVIDIA DGX, and Vertex AI to experiment with and evaluate industry-leading FMs.

- Customization with data through fine-tuning, Retrieval Augmented Generation (RAG), and agents that execute tasks using the organization's data sources.

Security optimization using:

- Alert AI integration.

- Enhance, Optimize, Manage Generative AI application security using Alert AI